I wrote previously about how AI agents are like an infinite queue of junior devs — eager, confident, zero context, summarily executed before they learn anything. That piece was about the problem. This one is about what I'm actually doing about it.

I've been building an e-commerce stack (Stoa) as a side project — Medusa, Odoo, React Router, dbt, the works. It's a real codebase with real complexity, not a demo. Over the past several months I've been developing a workflow system for working with Claude Code on it, borrowing heavily from the OpenSpec, Superpowers, and Get Shit Done frameworks, plus a fair amount of my own rules and guidance. What follows isn't a product or a framework — it's a frankenstein'd amalgam of ideas that I'm actively using and iterating on.

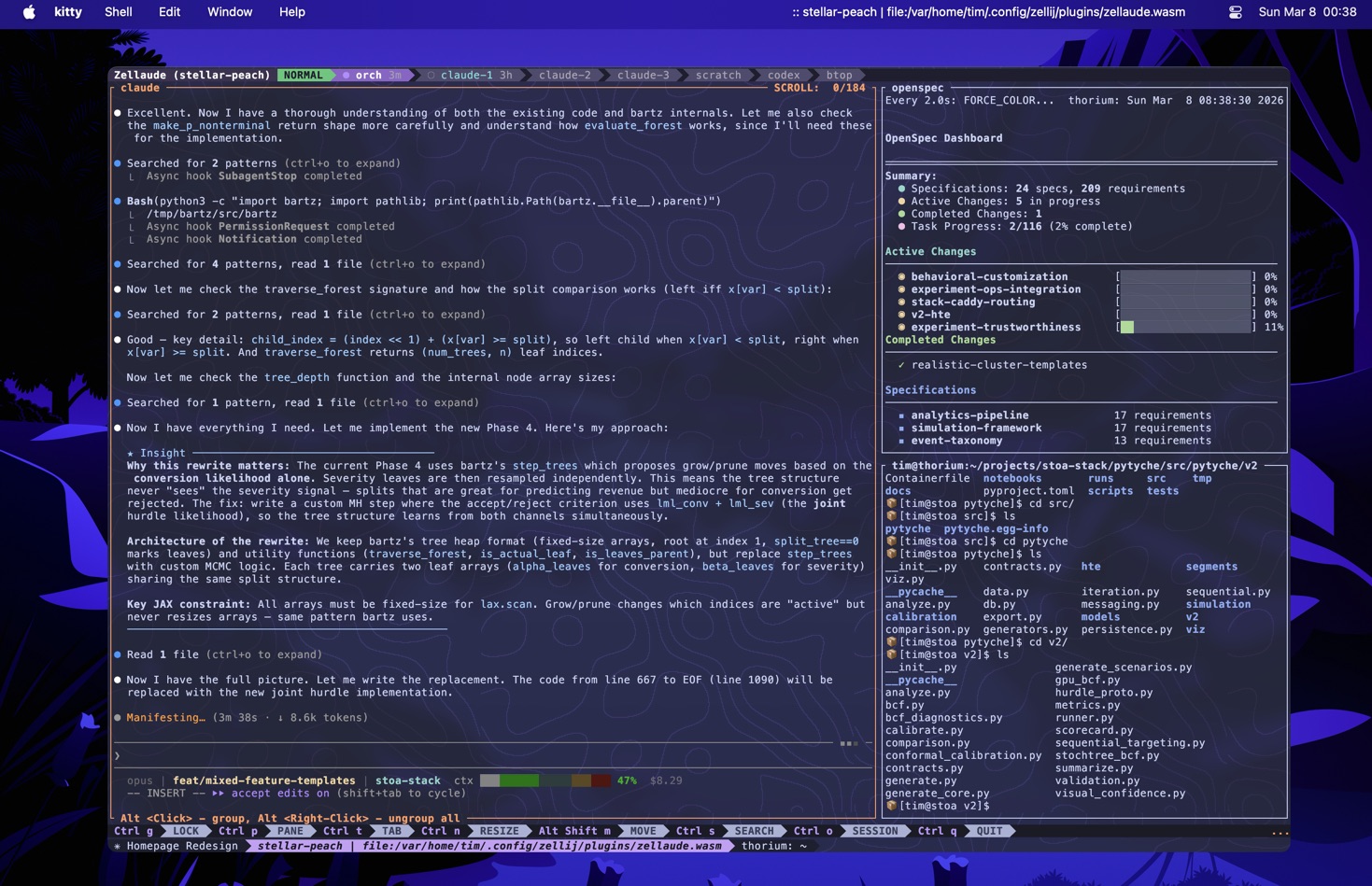

The shape of the system

The system has three layers:

A spec-driven workflow engine — Changes to the codebase go through a structured lifecycle: proposal → specs → design → tasks → implementation → verification → archive. Each stage produces artifacts that constrain the next. The OpenSpec CLI manages the artifact graph and dependency ordering.

An orchestrator/subagent pattern — Claude Code (Opus) acts as a thin orchestrator: it reads compressed context, dispatches Sonnet subagents for implementation, and runs review passes. Subagents get fresh context windows, scoped instructions, and strict boundaries. The orchestrator never implements — it dispatches and reviews.

Hooks for state and signals — Shell scripts that fire on session events and tool use, injecting context and surfacing warnings. These are informational — nudges, not enforcement.

None of these ideas are novel individually. The value, to the extent there is any, is in how they compose — and in the specific rules that make the system work in practice.
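To make "artifact graph and dependency ordering" concrete, here is a minimal sketch of the idea. It is not the OpenSpec CLI's implementation: the `ARTIFACT_DEPS` mapping and both function names are illustrative assumptions, and the dependencies are modeled as the simple linear chain described in this post.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each artifact lists the artifacts it needs.
# Assumed to be a linear chain (proposal, specs, design, tasks).
ARTIFACT_DEPS = {
    "proposal": set(),
    "specs": {"proposal"},
    "design": {"specs"},
    "tasks": {"design"},
}


def artifact_order(deps):
    """Return artifacts in an order where every dependency comes first."""
    return list(TopologicalSorter(deps).static_order())


def next_artifact(deps, done):
    """Return the first artifact not yet written, or None if all exist."""
    for name in artifact_order(deps):
        if name not in done:
            return name
    return None
```

Calling `next_artifact(ARTIFACT_DEPS, {"proposal", "specs"})` yields `"design"`, which is roughly the decision a "continue" step has to make.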

Thinking before building

Before any structured workflow, there's usually a /opsx:explore phase — an open-ended thinking mode where the agent acts as a conversation partner rather than an implementer. Explore mode is explicitly forbidden from writing code. It can read the codebase, draw ASCII diagrams, compare approaches, investigate problems, and ask sharpening questions — but it cannot implement. This constraint is the point.

Most of my changes start fuzzy. I know roughly what I want but haven't thought through the edges. Explore mode is where I work through that: what are the integration points, what does the existing code look like, what are the tradeoffs between approaches. If I mention a GitLab issue, it reads the issue body and uses that as a starting point. If there's an active OpenSpec change, it reads the existing artifacts for context.

When thinking crystallizes into something concrete, explore mode offers to transition — "This feels solid enough to start a change. Want me to create one?" But there's no pressure to formalize. Sometimes the thinking is the value.

I use Claude's built-in plan mode heavily during the design phase too. The combination — explore for open-ended investigation, plan mode for working through implementation details — means that by the time I'm writing specs and tasks, most of the hard thinking is already done.

The workflow lifecycle

Everything starts with a slash command. Claude Code skills are markdown files that define multi-step workflows, and I've built a set of them around the OpenSpec lifecycle:

/opsx:new <name> creates a change directory, shows the first artifact template, and stops. It hands control back to me. I then use /opsx:continue to step through artifacts one at a time — proposal, then specs, then design, then tasks — steering each one. This deliberate pace is what I use for most changes, especially anything with real design decisions. Each artifact gets my attention before the next one starts.

/opsx:ff <name> is the fast-forward alternative — it generates all artifacts in one pass, asking clarifying questions along the way. Useful when the change is well-understood and I just want to get to implementation. But it often moves too fast for anything that requires real thought about the design, and I find myself going back to fix artifacts that would have been right the first time with the deliberate approach.

The artifacts aren't bureaucracy for its own sake. Each one constrains the next stage in ways that compensate for specific LLM failure modes:

- The proposal forces articulation of why before what. Without this, you get solutions to the wrong problem.

- Delta specs define behavioral contracts with requirements and scenarios. These become the review checklist later — the subagent's output gets verified against them line by line.

- The design captures architectural decisions and file-level scope. This is what prevents the "refactor everything" impulse.

- Tasks are broken into TDD-phased groups: write failing tests, then implement. This is structural enforcement of test-driven development — the task ordering itself is the discipline.
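The "task ordering itself is the discipline" idea can be checked mechanically. The sketch below assumes a task list where each task carries a group and a phase; the `Task` fields and `tdd_violations` are hypothetical names, not part of OpenSpec.

```python
from dataclasses import dataclass


@dataclass
class Task:
    group: str   # feature group the task belongs to
    phase: str   # "test" or "implement"
    title: str


def tdd_violations(tasks):
    """Return titles of implement tasks that appear before any test task
    in the same group."""
    groups_with_tests = set()
    violations = []
    for task in tasks:
        if task.phase == "test":
            groups_with_tests.add(task.group)
        elif task.phase == "implement" and task.group not in groups_with_tests:
            violations.append(task.title)
    return violations
```

An empty result means every implementation task is preceded by at least one test task in its group. It says nothing about whether those tests are meaningful.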

After artifacts are created, a context brief gets written — a compressed ~2K token summary of the whole artifact chain. This is the key to keeping the orchestrator thin. The orchestrator reads the brief and tasks; the subagent reads the full specs and design from disk in its own fresh context window.

The orchestrator

/opsx:apply is where implementation happens. The orchestrator reads the context brief, scans remaining tasks for ambiguity (asking me questions if anything's unclear), then dispatches subagents.

Each subagent gets:

- The full text of its task (pasted, not referenced)

- Pointers to spec and design files (which it reads itself)

- File paths to modify

- A reference to CLAUDE-AGENT.md, the subagent instruction set
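As a rough illustration, the four inputs above could be assembled into a prompt like this. The `Dispatch` class, `build_prompt`, and the section headings are assumptions made for the sketch; the real dispatch prompt lives in a skill file and is worded differently.

```python
from dataclasses import dataclass


@dataclass
class Dispatch:
    task_text: str          # full task text, pasted verbatim
    spec_paths: list        # spec and design files the subagent reads itself
    files_to_modify: list   # the subagent's file scope
    instructions: str = "CLAUDE-AGENT.md"


def build_prompt(d):
    """Render the four dispatch inputs into one prompt string."""
    lines = ["## Task", d.task_text, "", "## Read before starting"]
    lines += [f"- {p}" for p in d.spec_paths]
    lines += ["", "## Files you may modify"]
    lines += [f"- {p}" for p in d.files_to_modify]
    lines += ["", f"Follow the rules in {d.instructions}."]
    return "\n".join(lines)
```

Pasting the task text while only pointing at the specs keeps the prompt short and leaves the heavy reading to the subagent's own context window.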

The dispatch prompt is explicit about boundaries: don't change files outside your scope, don't add features beyond the task, don't refactor neighboring code. And critically: if you hit ambiguity the spec doesn't answer, stop and return a structured question rather than guessing.

    QUESTION:
      question: "Should the discount apply before or after tax?"
      context: "Spec says 'apply discount to order total' but doesn't specify pre/post tax"
      options: ["Before tax", "After tax"]
      completed_so_far: ["Cart total calculation", "Discount validation"]
      blocking: "Discount application logic"

The orchestrator surfaces these to me via an interactive prompt. A quick answer here saves significant rework downstream.
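A structured block like the one above is easy to pull out of a subagent's report. This is a sketch under the assumption that each field sits on its own line and that every value is a JSON-compatible string or list, as in the example; `Question` and `parse_question` are hypothetical names.

```python
import json
from dataclasses import dataclass


@dataclass
class Question:
    question: str
    context: str
    options: list
    completed_so_far: list
    blocking: str


def parse_question(report):
    """Extract a QUESTION block from a subagent report, or return None."""
    lines = [ln.strip() for ln in report.splitlines() if ln.strip()]
    if "QUESTION:" not in lines:
        return None
    fields = {}
    for line in lines[lines.index("QUESTION:") + 1:]:
        key, sep, value = line.partition(":")
        if not sep or key not in Question.__dataclass_fields__:
            break  # first non-field line ends the block
        fields[key] = json.loads(value)  # values are JSON strings or lists
    return Question(**fields)  # raises TypeError if a field is missing
```

Failing loudly on a missing field is deliberate in this sketch: a half-formed question is itself a signal that the subagent's report needs a closer look.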

After each task, two mandatory review passes:

Spec compliance — A separate Sonnet subagent reads the task requirements and the actual code (not the implementer's report of what they built — the actual files). It checks for missing requirements, phantom implementations, and spec divergence.

Code quality — Another subagent reviews for separation of concerns, testing anti-patterns, type safety, and architecture.

Both reviewers are told explicitly: do not trust the implementer's self-report. Read the code. This matters because LLMs are unreliable self-assessors — they'll claim success confidently even when the implementation is incomplete.

Every 2-3 tasks, the orchestrator commits the batch and updates the context brief. This means if the session hits context limits or I need to pick up tomorrow, the state is recoverable.

The subagent instruction set

CLAUDE-AGENT.md is the trimmed instruction file that subagents read. It's where most of the behavioral shaping lives. A few things that matter:

The Iron Law of TDD: No production code without a failing test first. If you write code before the test, delete it. Don't keep it as reference, don't adapt it. Delete means delete. Implement fresh from tests.

This sounds rigid, and it is. LLMs left to their own devices will write the implementation first and then write tests that pass against it — which means the tests are testing the implementation's behavior, not the specification's requirements. Structural enforcement via task ordering (test tasks before implementation tasks) is more reliable than prompting alone.

Deviation rules — a clear boundary between what the subagent can fix autonomously (bugs in code it just wrote, missing imports, its own broken tests) and what requires asking the orchestrator (new files outside scope, architectural changes, three failed attempts on the same issue). This prevents both the "ask permission for everything" paralysis and the "silently refactor half the codebase" ambition.
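The deviation rules are prose in CLAUDE-AGENT.md, but the boundary they draw is simple enough to write down as a decision function. The sketch below is only a restatement of those rules; `Deviation`, `Action`, and `decide` are illustrative names.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    FIX = "fix autonomously"
    ASK = "ask the orchestrator"


@dataclass
class Deviation:
    path: str                    # file the fix would touch
    attempts: int = 0            # failed attempts on this issue so far
    architectural: bool = False  # would it change the design?


def decide(dev, scope):
    """Apply the deviation rules: escalate on scope, design, or repeated failure."""
    if dev.path not in scope:
        return Action.ASK   # file outside the task's scope
    if dev.architectural:
        return Action.ASK   # design changes are not the subagent's call
    if dev.attempts >= 3:
        return Action.ASK   # three failed attempts on the same issue
    return Action.FIX       # e.g. a bug or missing import in its own code
```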

Evidence before claims: The subagent must run tests and paste actual output before claiming anything works. Strong framing matters here: in my experience, subagents comply with verification requirements more reliably when the instruction set is blunt about them.

Hooks and real enforcement

Claude Code hooks are shell scripts that fire on session events and tool use. I use three:

Session state fires on startup, resume, and (critically) after context compaction. It injects git branch, working tree status, recent commits, and active OpenSpec changes. The compact trigger matters — when Claude Code auto-compresses the conversation, the agent loses orientation. This hook re-grounds it.
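The actual hook is a shell script. The Python sketch below shows the same idea under two assumptions: that whatever the hook prints is what gets injected into the agent's context, and that three git commands are enough for orientation. It leaves out the active OpenSpec changes, and `session_state` and its `run` parameter are hypothetical names.

```python
import subprocess


def git(*args):
    """Run a git command and return its trimmed stdout ('' on failure)."""
    try:
        result = subprocess.run(["git", *args], capture_output=True, text=True)
    except OSError:
        return ""  # git is not installed or not executable
    return result.stdout.strip() if result.returncode == 0 else ""


def session_state(run=git):
    """Build the orientation text for session start or after compaction."""
    branch = run("rev-parse", "--abbrev-ref", "HEAD") or "(unknown)"
    status = run("status", "--short") or "(clean)"
    commits = run("log", "--oneline", "-5") or "(none)"
    return (
        f"Branch: {branch}\n"
        f"Working tree:\n{status}\n"
        f"Recent commits:\n{commits}"
    )
```

A hook script would end with `print(session_state())`. The `run` parameter exists only so the function can be exercised without a real repository.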

Context pressure runs after every tool use. It reads a sidecar JSON (written by the status bar) to check how full the orchestrator's context window is. In the yellow zone, it suggests entering plan mode. In the red zone, it tells the agent to stay concise and prefer dispatching to subagents. Debounced by zone transitions and a cooldown timer so it doesn't spam.
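The debounce logic is the interesting part of that hook, so here is a sketch of one way it could work. The thresholds, the cooldown length, and all names are assumptions, and reading the sidecar JSON is left out: the function simply takes the fraction of the context window in use.

```python
from dataclasses import dataclass

YELLOW, RED = 0.60, 0.80   # assumed thresholds (fraction of window used)
COOLDOWN_SECONDS = 120     # assumed minimum gap between warnings

MESSAGES = {
    "yellow": "Context filling up: consider entering plan mode.",
    "red": "Context nearly full: stay concise, prefer dispatching to subagents.",
}


def zone(used_fraction):
    if used_fraction >= RED:
        return "red"
    if used_fraction >= YELLOW:
        return "yellow"
    return "green"


@dataclass
class HookState:
    warned_zone: str = "green"
    warned_at: float = float("-inf")


def check(state, used_fraction, now):
    """Return a warning string, or None when nothing should be emitted."""
    current = zone(used_fraction)
    if current == "green":
        state.warned_zone = "green"  # reset so re-entering yellow warns again
        return None
    if current == state.warned_zone:
        return None                  # already warned for this zone
    if now - state.warned_at < COOLDOWN_SECONDS:
        return None                  # cooling down; retry on a later tool call
    state.warned_zone, state.warned_at = current, now
    return MESSAGES[current]
```

Because the warned zone is only recorded when a warning is actually emitted, a red-zone warning suppressed by the cooldown is delivered on a later tool call instead of being lost.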

Time estimation uses a small Rust CLI to estimate duration for long-running tool invocations and flags that to the orchestrator, so it can plan around expensive operations rather than blocking on them.

These are useful signals, but they're not enforcement. The way I use them, hooks are advisory, and an agent can ignore a warning. The real enforcement in my setup comes from a separate project, orch, which manages the execution environment itself. Orch layers three hard boundaries:

- Container mounts (podman-compose) — coarse isolation of what files exist in the execution environment at all.

- Bwrap namespaces — fine-grained, per-command filesystem scoping. An agent implementing a feature gets read-only access to tests but can't modify them; a separate deterministic step runs the tests against its output.

- MCP profiles — scoped tool availability per step. Different stages of the workflow get different capabilities.

A hook can tell an agent "don't modify tests." Orch makes them read-only in the agent's filesystem view.
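To show what "read-only in the agent's filesystem view" can look like, here is a sketch that builds a bwrap command line. It is not orch's actual invocation: the function name, the directory layout, and the choice to expose the whole host filesystem read-only are assumptions made for illustration. The flags used (`--ro-bind`, `--bind`, `--dev`, `--proc`, `--tmpfs`, `--die-with-parent`, `--chdir`) are standard bubblewrap options.

```python
from pathlib import PurePosixPath


def bwrap_argv(project, writable, read_only, command):
    """Build a bwrap argv where only `writable` paths can be modified."""
    root = PurePosixPath(project)
    argv = [
        "bwrap",
        "--ro-bind", "/", "/",   # everything visible, nothing writable
        "--dev", "/dev",
        "--proc", "/proc",
        "--tmpfs", "/tmp",       # private scratch space
        "--die-with-parent",
        "--chdir", str(root),
    ]
    for rel in writable:
        path = str(root / rel)
        argv += ["--bind", path, path]      # re-bind read-write
    # Read-only entries come last so they win over a writable parent.
    for rel in read_only:
        path = str(root / rel)
        argv += ["--ro-bind", path, path]
    return argv + list(command)
```

For example, `bwrap_argv("/work/stoa", ["src"], ["tests"], ["npm", "test"])` (a hypothetical path and command) gives the process a writable `src` and a `tests` directory it can read but not change.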

Cross-model review

One more piece: /codex-review shells out to OpenAI's Codex CLI for model-diverse feedback. The script assembles context (CLAUDE.md, the context brief, specs, the diff) and runs a non-interactive review. The output goes to a markdown file in the change directory.
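The context-assembly step is plain concatenation. A minimal sketch, assuming each input is already available as text and that missing inputs should be skipped silently; the function names and the markdown section layout are illustrative, and the call to the Codex CLI itself is left out.

```python
from pathlib import Path


def read_if_exists(path):
    """Return the file's text, or '' when the file is absent."""
    p = Path(path)
    return p.read_text() if p.is_file() else ""


def assemble_review_context(sections):
    """Join (title, text) pairs into one markdown document for the reviewer."""
    parts = []
    for title, text in sections:
        if text.strip():  # skip inputs that are missing or empty
            parts.append(f"## {title}\n\n{text.strip()}\n")
    return "\n".join(parts)
```

Keeping each input under its own heading makes it easier for the reviewing model to tell standing project rules apart from the diff it is being asked to critique.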

This isn't about one model being better than the other. It's about getting a second perspective from a model that hasn't been involved in the implementation. The review catches different things — different biases, different blind spots. It's the same reason you want a code reviewer who didn't write the code.

What a typical session looks like

me: /opsx:explore

claude: "What are you thinking about?"

me: "review collection for the storefront: post-purchase emails, display, maybe incentives"

claude: [reads existing storefront code, draws integration diagram]

claude: "Three questions: timing, incentive structure, moderation..."

[back-and-forth conversation, ~10 minutes]

claude: "This feels solid. Want me to start a change?"

me: /opsx:new add-review-collection

claude: [creates change directory, shows proposal template]

me: [describes the change, claude drafts proposal]

me: /opsx:continue

claude: [shows spec template, drafts delta specs based on proposal]

me: [adjusts a requirement, adds a scenario I thought of]

me: /opsx:continue

claude: [drafts design; I use plan mode here to think through the implementation approach before committing to it]

me: /opsx:continue

claude: [generates TDD-phased tasks from the design]

claude: "All artifacts created. Ready for implementation."

me: /opsx:apply

claude: "Using change: add-review-collection. 8 tasks remaining."

claude: [pre-flight: asks about email timing]

me: "24 hours after delivery confirmation"

claude: [dispatches subagent for test task 1]

claude: "✓ Task 1 complete. Spec compliance: passed. Quality: passed."

...

claude: "8/8 tasks complete. All tests passing."

me: /opsx:verify add-review-collection

claude: [completeness, correctness, wiring, coherence checks]

claude: "All checks passed. Ready for archive."

me: /codex-review add-review-collection

claude: [cross-model review, saves output]

me: /opsx:archive add-review-collection

claude: [syncs delta specs to standing specs, archives]

The whole thing might take a couple of hours for a medium-complexity feature. My active involvement is front-loaded in the explore and artifact phases — maybe 20-30 minutes of real thinking about the design. Once /opsx:apply starts, it's mostly autonomous, with occasional clarification questions and review decisions surfaced to me.

What works and what doesn't

What works:

- The context brief pattern is the single most impactful thing. Keeping the orchestrator thin and letting subagents read full context from disk solves the "orchestrator runs out of context" problem that kills long sessions.

- Structured ambiguity surfacing (QUESTION blocks) catches specification gaps early. Without this, subagents guess — and they guess confidently and wrong.

- Mandatory review passes catch real issues. The "don't trust the self-report" framing is not paranoia — it's responding to observed behavior.

- Session state hooks after compaction prevent the disorientation that used to derail resumed sessions.

- Filesystem-level isolation via orch's bwrap sandboxing is more reliable than instruction-based constraints. If an agent can't see files, it can't modify them.

What doesn't:

- The system adds overhead that isn't worth it for small changes. A typo fix doesn't need a proposal, specs, and design. I skip the workflow for trivial stuff, but the boundary between "trivial" and "worth speccing" is a judgment call I get wrong sometimes.

- Parallel subagent dispatch with worktree isolation works in theory but is fragile in practice. Merge conflicts from parallel branches touching shared files eat the time savings. I mostly dispatch sequentially now.

- The TDD enforcement works mechanically but doesn't prevent shallow tests. A subagent can write a test that technically fails first and technically passes after implementation while testing almost nothing meaningful. The review pass catches some of this, but not all.

- Context pressure warnings help but don't solve the fundamental problem. When the orchestrator is deep in a complex change and the context window is filling up, there's no graceful handoff — you just lose fidelity.

The meta-point

None of this is sophisticated. It's shell scripts, markdown files, a Python CLI, and some bwrap namespacing — held together by explicit instructions to an LLM about how to behave and hard filesystem boundaries for when instructions aren't enough. The "framework" is structured prompting with filesystem state and container isolation.

But it works better than unstructured prompting, and it works better than not using AI tools at all. The uncomfortable middle ground is that AI development tools are genuinely useful and require significant scaffolding to be reliable on anything beyond trivial tasks. The scaffolding is the work — and right now, the scaffolding is something each team or individual has to build for themselves.

I expect the tooling to get better and much of this to become unnecessary. But today, this is what actually shipping features with AI tools on a real codebase looks like for me.